- Published on

Network Flows - Part 6

- Authors

- Name

- Till Heller

In the previous parts, we focused on pushing as much flow as possible from source to sink. But in many real-world scenarios, not all routes are created equal. Shipping goods through one city might be cheap, while routing them through another might be expensive. When we care about both how much we send and what it costs, we need the minimum-cost flow problem — a beautiful generalization that ties together maximum flow, shortest paths, and linear programming.

The Problem

Let's extend our flow networks with a new ingredient: a cost for each arc.

Definition 12. A minimum-cost flow network is a tuple , where is a flow network as before, and is a cost function assigning a cost to each arc . The cost represents the cost per unit of flow on arc .

Definition 13. The cost of a flow is .

Now we can state the problem precisely.

Minimum-Cost Maximum Flow Problem. Given a minimum-cost flow network , find a maximum flow that minimizes among all maximum flows.

Think of the supply chain from Part 1: we want to transport as much product as possible (maximum flow), but among all ways of doing so, we want the cheapest one (minimum cost). The two objectives are lexicographic — first maximize flow, then minimize cost.

There is also a more general formulation where we fix a desired flow value and ask for the cheapest flow of value exactly . The maximum-flow version is the special case where .

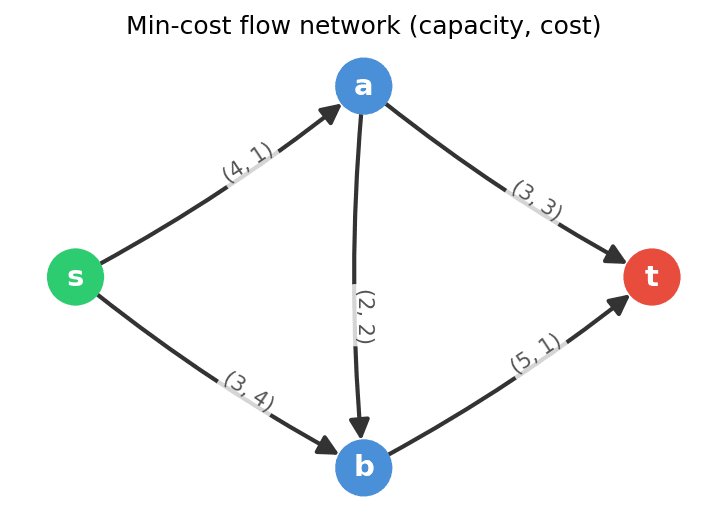

Let's set up a running example. Consider the network with vertices , arcs and capacities , , , , , and costs , , , , .

The cheapest route from to goes through and then directly to (cost per unit), while the route through costs more per unit () but has more capacity. The challenge is finding the right balance.

Residual Costs

In Part 3, we introduced the residual graph to capture where more flow can be sent. For minimum-cost flows, we need to extend the residual graph with costs.

Definition 14. Given a flow in a minimum-cost flow network, the residual cost of an arc in the residual graph is defined as:

- For a forward arc : . Sending more flow along this arc costs per unit.

- For a backward arc : . Cancelling flow on saves per unit, so the residual cost is negative.

The backward arc costs are the key insight. When we "undo" flow on an arc, we recover the cost we previously paid. This means that a negative-cost cycle in the residual graph represents a way to reroute existing flow to reduce the total cost without changing the flow value — we push flow around the cycle, and the negative total cost means we save money.

Negative Cycle Optimality

This observation leads to a clean characterization of optimal flows.

Theorem 8 (Negative Cycle Optimality). A feasible flow of a given value is minimum-cost if and only if the residual graph contains no negative-cost directed cycle.

Proof. For the "only if" direction: suppose contains a directed cycle with negative total cost . Let be the minimum residual capacity along . We can push units of flow around . Since is a cycle, the flow value doesn't change (whatever enters a vertex also leaves it), but the cost decreases by . So was not minimum-cost.

For the "if" direction, the argument is more involved and relies on linear programming duality. The idea is that if no negative-cost cycle exists, we can construct a set of vertex potentials (dual variables) that certify optimality via complementary slackness1. We omit the full proof here.

The Cycle-Canceling Algorithm

The negative cycle optimality condition immediately suggests an algorithm: start with any maximum flow and repeatedly cancel negative-cost cycles until none remain.

Cycle-Canceling Algorithm:

- Find a maximum flow (using Edmonds-Karp or any max-flow algorithm).

- Build the residual graph with residual costs.

- Search for a negative-cost directed cycle in .

- If no negative cycle exists, stop — is a minimum-cost maximum flow.

- Otherwise, compute and push units around .

- Go to step 2.

Finding a negative cycle can be done using the Bellman-Ford algorithm, which detects negative cycles in time.

Observation 3. Each iteration of the cycle-canceling algorithm strictly decreases the cost of the flow while preserving the flow value.

For integer capacities and costs, the algorithm terminates because the cost is an integer that decreases by at least in each iteration and is bounded below. However, the number of iterations can be large — proportional to the total cost, which may be exponential in the input size. More sophisticated cycle selection rules (such as always canceling the minimum mean cost cycle) lead to polynomial-time variants, but we won't go into those details here.

The Successive Shortest Paths Algorithm

There is an alternative approach that builds the minimum-cost maximum flow from scratch, rather than first finding any maximum flow and then repairing it. The idea is simple: send flow along the cheapest possible route, one unit at a time.

Successive Shortest Paths Algorithm:

- Initialize for every arc .

- Build the residual graph with residual costs.

- Find a shortest path (minimum total cost) from to in using the residual costs as edge weights.

- If no --path exists, stop — is a minimum-cost maximum flow.

- Otherwise, let be the shortest path and .

- Push units of flow along (same as in Ford-Fulkerson).

- Go to step 2.

This algorithm augments flow along the cheapest augmenting path instead of the shortest (fewest arcs) augmenting path. Each augmentation simultaneously increases the flow value and ensures minimum cost for the current flow value.

Theorem 9. The successive shortest paths algorithm maintains the invariant that after each augmentation, the current flow is a minimum-cost flow among all flows of the same value.

Proof sketch. Initially, the zero flow is trivially minimum-cost (it's the only flow of value ). Suppose the current flow with value is minimum-cost. We augment along a shortest path in the residual graph. If the resulting flow were not minimum-cost for value , there would be a cheaper flow of the same value. The difference between and can be decomposed into cycles in the residual graph of . But the shortest-path augmentation guarantees that no negative-cost cycle exists in 2 — a contradiction.

There is one subtlety: the residual graph may contain negative-cost arcs (the backward arcs), so we cannot directly use Dijkstra's algorithm for the shortest-path computation. However, we can use Bellman-Ford ( per iteration), or — more cleverly — maintain vertex potentials to transform all residual costs to non-negative values, enabling Dijkstra's algorithm ( per iteration with a Fibonacci heap).

With integer capacities, each augmentation sends at least unit, so the algorithm performs at most augmentations. Using Bellman-Ford, the total running time is . With the potential-based Dijkstra approach, each iteration costs , giving .

A Worked Example

Let's trace the successive shortest paths algorithm on our running example: , , , , , and costs , , , , .

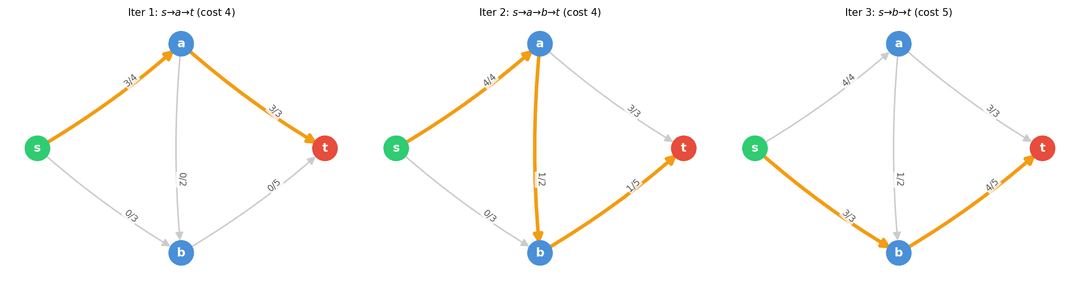

Iteration 1. We find the shortest (cheapest) path from to . The candidates:

- : cost , bottleneck .

- : cost , bottleneck .

- : cost , bottleneck .

Both and have cost . Let's pick with bottleneck . We push units: , . Flow value: . Cost: .

Iteration 2. Residual graph: forward arcs with capacity and cost , with capacity and cost , with capacity and cost , with capacity and cost ; backward arcs with capacity and cost , with capacity and cost .

Cheapest path from to :

- : cost , bottleneck .

- : cost , bottleneck .

We pick with bottleneck . Push unit: , , . Flow value: . Cost: .

Iteration 3. Now is saturated. Residual forward arcs from : only with capacity and cost .

Cheapest path: with cost , bottleneck . Push units: , . Flow value: . Cost: .

Iteration 4. Residual forward arcs from : none (both and are saturated). No --path exists. The algorithm terminates.

The minimum-cost maximum flow has value and cost . The final flow is , , , , .

Notice how the algorithm prioritized the cheap route through before using the more expensive route through . It also used the intermediate arc to squeeze one extra unit through the cheaper part of the network. This is the successive shortest paths algorithm at work: each unit of flow is sent along the currently cheapest route.

Looking Ahead

With minimum-cost flows, we've reached the most general problem in this series. In the next and final part, we'll explore applications of minimum-cost flows — from transportation and assignment problems to minimum-weight matchings — and reflect on how the entire network flow framework connects the topics we've studied.

Footnotes

The connection to linear programming runs deep. The minimum-cost flow problem is a linear program, and the absence of negative residual cycles corresponds to dual feasibility. This duality is also the reason minimum-cost flow algorithms are closely related to shortest-path algorithms. ↩

This is the key insight: augmenting along a shortest path in the residual graph preserves the negative-cycle-free property. The proof uses the fact that shortest-path distances serve as vertex potentials that make all residual costs non-negative — the same reduced-cost technique used in Johnson's algorithm for all-pairs shortest paths. ↩